Top 3 Big Data Transfer Methods in 2025

April 10, 2025

Let's face it, data is huge in 2025. We're all juggling massive files daily, from crisp videos to hefty databases. It's not just about clicking 'send' – speed, security, and reliability are key.

In this post, we'll walk through the top three methods for big data transfer, helping you find the perfect fit for secure, hassle-free transfers.

At What Point Does Data Become 'Big Data'?

There's no single magic number, but "Big Data" typically describes datasets that are too large, too fast, or too complex for traditional data processing tools. Here's how the "3 V's" look with some numerical context:

Volume (The Size): We're talking about data quantities significantly larger than what fits comfortably in a standard relational database on a single powerful server.

- This often starts in the range of tens or hundreds of terabytes (TB) and quickly scales into petabytes (PB) or even exabytes (EB).

- (Context: 1 PB = 1,000 TB; 1 EB = 1,000 PB). Traditional tools often struggle significantly past the multi-terabyte range.
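To make the unit arithmetic above concrete, here is a minimal sketch that converts between decimal units (1 PB = 1,000 TB, as stated above). The helper names `to_bytes` and `human_size` are illustrative, not part of any particular library.

```python
# Decimal (SI) units, matching the 1 PB = 1,000 TB convention used above.
UNITS = ["B", "KB", "MB", "GB", "TB", "PB", "EB"]


def to_bytes(amount: float, unit: str) -> int:
    """Convert an amount in the given decimal unit to bytes."""
    return int(amount * 1000 ** UNITS.index(unit))


def human_size(num_bytes: int) -> str:
    """Format a byte count using the largest unit that keeps the value below 1,000."""
    value = float(num_bytes)
    for unit in UNITS:
        if value < 1000 or unit == UNITS[-1]:
            return f"{value:.1f} {unit}"
        value /= 1000
```

For example, `to_bytes(1, "PB") // to_bytes(1, "TB")` gives 1000, the ratio quoted above.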

Velocity (The Speed): This refers to data being generated and needing processing at extremely high speeds.

- Think of data streams generating thousands or millions of events per second, requiring near real-time analysis (e.g., processing within milliseconds or seconds). It could be hundreds of megabytes or even gigabytes of log data generated per minute by a popular website.

Variety (The Types): This includes a mix of structured, semi-structured, and unstructured data.

- A dataset might include billions of structured records (like sales transactions) combined with terabytes of unstructured user reviews (text), petabytes of images and videos, and gigabytes of semi-structured JSON logs from web servers. The challenge lies in integrating and analyzing these diverse types together.

Method 1: Use Online File Transfer Service [For Individuals]

If you need to send heavy files but don’t have enterprise-level needs, online file transfer services offer a quick and easy solution.

Platforms like WeTransfer, Google Drive, and Dropbox enable users to send files up to a few gigabytes with minimal effort.

Follow these steps to use an online file transfer service:

- Choose a service – Select an online transfer tool like WeTransfer or Google Drive.

- Upload your file – Drag and drop your large file onto the platform.

- Generate a link – Once uploaded, the service will create a downloadable link.

- Share the link – Send the link via email or messaging apps to the recipient.

While these platforms are great for quick transfers, they often impose file size limits and tend to slow down on extremely large transfers.

Method 2: Use FTP [Terabyte Size]

File Transfer Protocol (FTP) is a reliable method for transferring large data over the Internet.

It is widely used in industries that deal with massive files, such as media production and IT.

Follow these steps to use FTP for large data transfer:

- Set up an FTP client – Install a reliable FTP client like FileZilla or Cyberduck, and make sure you have access to an FTP server to connect to.

- Establish a connection – Enter the server credentials (IP address, username, password).

- Upload your data – Drag and drop files to start the upload process.

- Download on the recipient’s end – The receiver logs in and retrieves the files.

FTP is well suited to sending big data, but plain FTP transmits credentials and files unencrypted. For sensitive data, use FTPS (FTP over TLS) or SFTP (a separate file transfer protocol that runs over SSH).
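If you prefer scripting to a graphical client, the connect-and-upload steps above can be sketched with Python's standard `ftplib`. This is a minimal sketch, assuming the server supports FTPS (FTP over TLS); the function names, host, and credentials are placeholders, not values from any real service.

```python
from ftplib import FTP_TLS
from pathlib import Path


def connect_ftps(host: str, user: str, password: str) -> FTP_TLS:
    """Open an FTPS session (host and credentials are supplied by the caller)."""
    ftp = FTP_TLS(host)        # connects to the server's FTP port
    ftp.login(user, password)  # FTP_TLS secures the control channel before authenticating
    ftp.prot_p()               # switch the data channel to TLS as well
    return ftp


def upload_file(ftp, local_path: str, remote_name: str,
                block_size: int = 1024 * 1024) -> int:
    """Upload local_path as remote_name over an open session; return the file size in bytes."""
    path = Path(local_path)
    with path.open("rb") as handle:
        # Stream the file in blocks so large files are not loaded into memory at once.
        ftp.storbinary(f"STOR {remote_name}", handle, blocksize=block_size)
    return path.stat().st_size
```

A typical call would be `ftp = connect_ftps("ftp.example.com", "user", "secret")`, then `upload_file(ftp, "dataset.tar", "dataset.tar")`, then `ftp.quit()`. The recipient can retrieve the file the same way with `ftp.retrbinary`.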

Method 3: Use Raysync [Terabyte Size]

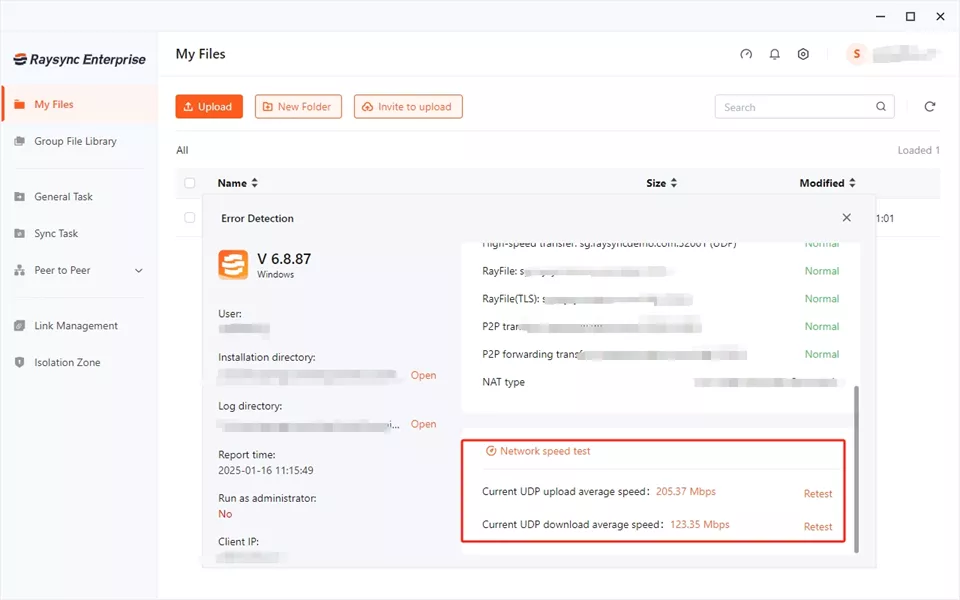

For businesses handling terabytes of data, Raysync is an advanced file transfer solution designed to deliver ultra-fast, secure, and highly efficient large-scale data transfers.

Unlike traditional methods such as FTP, which can be slow and unreliable for extreme data loads, Raysync is built with an intelligent transfer acceleration protocol that ensures maximum speed and security.

Raysync supports multi-channel transmission, optimizing bandwidth usage to reduce latency and improve efficiency.
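To illustrate the general idea behind multi-channel transfer, the sketch below splits a file into byte ranges and copies them concurrently. It is not Raysync's protocol, which is not described here; it is a local file-to-file illustration, and the function names are hypothetical.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def split_ranges(total_size: int, channels: int) -> list[tuple[int, int]]:
    """Split [0, total_size) into at most `channels` contiguous (offset, length) ranges."""
    if channels < 1:
        raise ValueError("channels must be at least 1")
    if total_size <= 0:
        return []
    chunk = -(-total_size // channels)  # ceiling division
    return [(off, min(chunk, total_size - off)) for off in range(0, total_size, chunk)]


def copy_range(src: str, dst: str, offset: int, length: int) -> None:
    """Copy one byte range; each worker uses its own file handles."""
    with open(src, "rb") as fin, open(dst, "r+b") as fout:
        fin.seek(offset)
        fout.seek(offset)
        fout.write(fin.read(length))  # a real tool would stream this in smaller blocks


def parallel_copy(src: str, dst: str, channels: int = 4) -> None:
    """Copy src to dst using several concurrent 'channels' (threads)."""
    size = os.path.getsize(src)
    with open(dst, "wb") as fout:
        fout.truncate(size)  # pre-size the destination so ranges can land anywhere
    with ThreadPoolExecutor(max_workers=channels) as pool:
        futures = [pool.submit(copy_range, src, dst, off, length)
                   for off, length in split_ranges(size, channels)]
        for future in futures:
            future.result()  # re-raise any worker error
```

Over a real network, each range would travel on its own connection, so one slow or lossy stream does not stall the whole transfer.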

It also incorporates enterprise-grade encryption and advanced access control, making it a preferred choice for businesses dealing with sensitive information.

With no file size restrictions and an emphasis on reliability, Raysync stands out as the go-to solution for companies that require high-speed, secure, and scalable big data transfer solutions.

Pros:

- High-Speed Transfers – Uses multi-channel acceleration for rapid large data transfers.

- No File Size Limits – Perfect for businesses handling massive files.

- Enterprise-Grade Security – Built-in encryption ensures data protection.

- Cross-Platform Compatibility – Works with Windows, macOS, and Linux.

- Seamless Integration – Connects with cloud storage and enterprise applications.

- Bandwidth Optimization – Smart scheduling reduces network congestion.

Cons:

- Subscription Required – The advanced features are only available on a paid plan.

Pricing Model:

| | Raysync Cloud | SMB | Enterprise |
| --- | --- | --- | --- |
| Plan Pricing | USD $99/month | USD $1,599/year | Tailored plans |
| Type of Service | Cloud | On-premise | On-premise |
| UDP Bandwidth | 1 Gbps | 1 Gbps | By license |
| Transfer/Download Traffic | 2 TB | Unlimited | Unlimited |
| Storage Limit | 1 TB | Unlimited | Unlimited |
| Maximum Users | 10 | 10 | Unlimited |
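
To put the bandwidth and traffic figures in perspective, here is a rough back-of-the-envelope estimate of how long a transfer takes on a given link. It is a sketch under ideal assumptions (decimal units, a fully saturated link); the `efficiency` parameter is a hypothetical knob for real-world overhead, not a published figure.

```python
def transfer_hours(size_tb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Estimate hours needed to move size_tb terabytes over a link_gbps link.

    efficiency is the assumed fraction of the link actually used (1.0 = ideal).
    """
    bits = size_tb * 1e12 * 8                       # terabytes -> bits
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 3600


# The Raysync Cloud plan's 2 TB of traffic over its 1 Gbps bandwidth, ideal case:
print(f"{transfer_hours(2, 1):.2f} hours")  # about 4.44 hours
```

At an assumed 50% efficiency (`transfer_hours(2, 1, efficiency=0.5)`), the same transfer takes roughly twice as long.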

Discussion about Big Data Transfer on Reddit

A recent discussion on Reddit explored the challenges of sending large data efficiently. IT professionals and system administrators shared insights on various transfer methods, from traditional FTP solutions to advanced enterprise tools.

One common frustration was the inefficiency of cloud-based services like Google Drive for large-scale data transfers due to file size limits and slow upload/download speeds.

Several users recommended alternative methods, such as setting up direct high-speed network transfers and leveraging dedicated data migration tools.

As an aside, from a professional perspective, Raysync makes big data transfer significantly more manageable. Feel free to get in touch with us.

The End

Moving big data can be tough. While options like online services or FTP work for smaller tasks, businesses need serious speed and security. Raysync steps up, using smart tech to send huge files quickly and safely. It's a top pick when you need reliable, fast transfers for massive datasets.
