Training Data Transfer: Multi-Terabyte Dataset File Exchange

March 4, 2026

In 2026, the AI industry faces a paradox. Breakthroughs in model architecture continue apace—yet the foundational challenge remains stubbornly physical: moving the data itself.

The world's most sophisticated neural network is worthless if its training data is stranded on a server in Frankfurt, Singapore, or Silicon Valley with no efficient way to reach the GPU cluster that needs it.

This is the invisible crisis of enterprise AI.

The Insatiable Appetite

Modern foundation models are fed on datasets measured in petabytes.

Consider what AI teams face daily:

- A single computer vision corpus routinely exceeds 1.2PB
- Multimodal models require simultaneous transfer of video, text, and sensor data from globally distributed sources
- Daily incremental updates of 80TB+ are standard in production environments

Yet most organizations move this fuel using protocols of traditional design.

FTP transfers 1TB in approximately five days. At that rate, even a 5TB training dataset requires 25 days simply to arrive, before a single training epoch begins, and a 5PB corpus would take roughly a thousand times longer.
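The scaling behind that estimate can be worked through in a few lines. This is a minimal sketch that assumes decimal units (1TB = 10^12 bytes) and takes the five-days-per-terabyte figure at face value; the function names are illustrative only.

```python
TB = 10**12            # decimal terabyte, in bytes (an assumption)
PB = 10**15            # decimal petabyte, in bytes
SECONDS_PER_DAY = 86_400


def implied_rate_mbps(num_bytes: int, days: float) -> float:
    """Average rate, in megabits per second, implied by moving num_bytes in `days`."""
    return num_bytes * 8 / (days * SECONDS_PER_DAY) / 1e6


def days_at_rate(num_bytes: int, rate_mbps: float) -> float:
    """Days needed to move num_bytes at a constant rate given in megabits per second."""
    return num_bytes * 8 / (rate_mbps * 1e6) / SECONDS_PER_DAY


# Rate implied by "1TB in five days"
rate = implied_rate_mbps(1 * TB, 5)
print(f"implied rate: {rate:.1f} Mbps")

# Time for larger datasets at that same rate
print(f"5TB: {days_at_rate(5 * TB, rate):,.0f} days")
print(f"5PB: {days_at_rate(5 * PB, rate):,.0f} days")
```

Transfer time grows linearly with dataset size at a fixed rate, so a thousandfold larger dataset takes a thousand times longer.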

This is not a technical inconvenience. It is a competitive disadvantage measured in delayed model iterations and stalled AI initiatives.

The Rsync Trap

Many AI teams default to rsync—reliable for its time, but fundamentally incapable of addressing today's requirements.

A Fortune 500 company recently confronted this reality. Tasked with synchronizing massive training datasets across data centers in Germany, Spain, Belgium, and Shenzhen, its rsync-based infrastructure collapsed.

The breaking points:

- File volume paralysis: With millions of small files, rsync spent more time traversing directories than transferring data
- Protocol fragility: Multi-day transfers routinely failed with no intelligent resume
- Bandwidth starvation: Even on dedicated links, throughput never exceeded 50% of capacity

Rsync, FTP, and HTTP were designed for occasional file exchange, not the continuous, high-velocity data pipelines that modern AI demands.
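To make the "intelligent resume" point concrete, here is a minimal sketch of offset-based resume for a single file. It is a generic illustration, not how rsync or Raysync implement it. It assumes the bytes already at the destination are an intact prefix of the source; a production tool would verify that with checksums. The function name and chunk size are hypothetical.

```python
import os

CHUNK = 4 * 1024 * 1024  # 4 MiB per read; an arbitrary illustrative size


def resume_copy(src: str, dst: str, chunk: int = CHUNK) -> int:
    """Copy src to dst, continuing from however many bytes dst already holds.

    Returns the number of bytes written by this call.
    """
    done = os.path.getsize(dst) if os.path.exists(dst) else 0
    total = os.path.getsize(src)
    if done > total:
        # Destination is longer than the source, so it cannot be a partial copy: restart.
        done = 0

    written = 0
    mode = "r+b" if done else "wb"  # "wb" creates or truncates; "r+b" keeps existing bytes
    with open(src, "rb") as fin, open(dst, mode) as fout:
        fin.seek(done)
        fout.seek(done)
        while True:
            block = fin.read(chunk)
            if not block:
                break
            fout.write(block)
            written += len(block)
    return written
```

Calling `resume_copy` again after an interruption sends only the remaining bytes, so a failure late in a multi-day transfer does not force a restart from zero.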

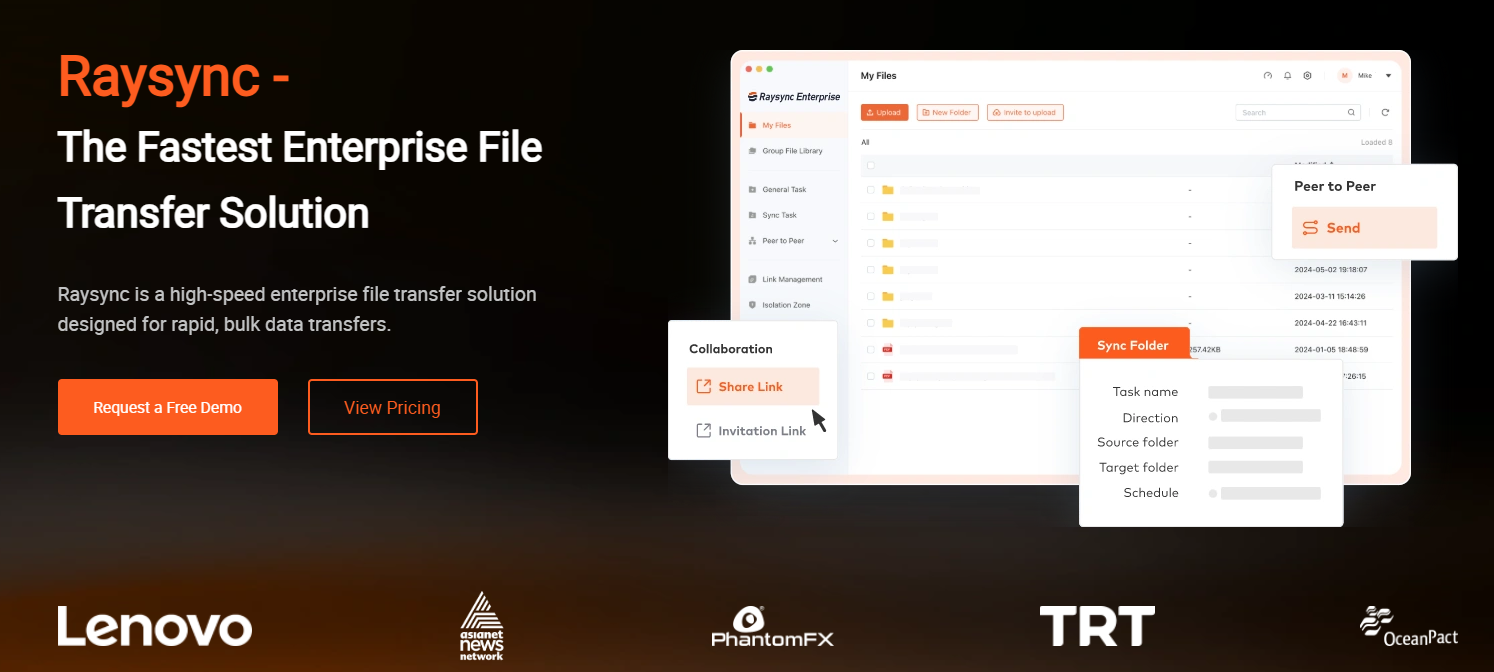

The Alternative — Raysync

Raysync began with a contrarian observation: if the problem has changed, the solution must change with it.

Our proprietary UDP-based protocol renders legacy limitations irrelevant:

- 85% effective throughput even under 50% network packet loss, where traditional protocols treat packet loss as catastrophe
- 96% bandwidth utilization, where FTP struggles to exceed 30%
- 10Gbps sustained throughput, with clustered configurations reaching 100Gbps, where conventional tools measure in megabits

The result is category change.

A 1.2PB training dataset that required 32 days to mobilize now arrives in 4 days. Daily 80TB incremental updates complete in about 18 hours on a sustained 10Gbps link.
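As a rough sanity check on figures like these, transfer time follows directly from dataset size, link speed, and bandwidth utilization. The sketch below is plain arithmetic, not a Raysync tool. It assumes decimal units and reuses the 10Gbps, 100Gbps, and 96% figures quoted above; the function name is illustrative.

```python
def transfer_hours(num_bytes: float, link_gbps: float, utilization: float = 1.0) -> float:
    """Hours needed to move num_bytes over a link of link_gbps at the given utilization."""
    effective_bits_per_second = link_gbps * 1e9 * utilization
    return num_bytes * 8 / effective_bits_per_second / 3600


TB = 1e12  # decimal terabyte, in bytes (an assumption)
PB = 1e15  # decimal petabyte, in bytes

for label, size in (("80TB", 80 * TB), ("1.2PB", 1.2 * PB)):
    for gbps in (10, 100):
        hours = transfer_hours(size, gbps, utilization=0.96)
        print(f"{label} at {gbps}Gbps, 96% utilization: {hours:.1f} h ({hours / 24:.2f} days)")
```

Running the same function against your own link speed gives a quick upper bound on what any transfer tool can deliver.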

This is the difference between aspirational and operational AI.

Security Without Sacrifice

Training data contains raw, unredacted source material—sensor logs, customer interactions, PII.

Traditional transfer forces a Faustian bargain: accelerate unencrypted or encrypt and crawl.

Raysync refuses this compromise.

We implement bank-grade TLS 1.3 and AES-256 encryption as foundational architecture, not optional overlay. Data streams stay protected without the 60-80% performance penalty of VPN-wrapped legacy transfers.

For enterprises under GDPR or HIPAA, this is compliant acceleration versus compliance-driven paralysis.

The Hidden Cost of Manual Intervention

When a 20TB training corpus fails at 3:00 AM—as FTP-based transfers reliably do—someone must detect, restart, and monitor. Organizations using conventional tools report 72% network-induced interruption rates for long-haul transfers.

Raysync eliminates this tax through automation:

- Scheduled policies execute without human intervention
- Incremental change detection captures modifications at millisecond granularity
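For readers unfamiliar with incremental change detection, the general idea can be sketched by comparing two snapshots of a directory tree. This is a generic illustration and not Raysync's mechanism. It assumes that a change in file size or modification time signals a changed file, and the timestamp resolution actually available depends on the filesystem. The function names are hypothetical.

```python
import os


def snapshot(root: str) -> dict[str, tuple[int, int]]:
    """Map each file's path (relative to root) to its (size, mtime in nanoseconds)."""
    state = {}
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            st = os.stat(full)
            state[os.path.relpath(full, root)] = (st.st_size, st.st_mtime_ns)
    return state


def diff(old: dict, new: dict) -> tuple[list[str], list[str], list[str]]:
    """Return (added, removed, changed) relative paths between two snapshots."""
    added = sorted(p for p in new if p not in old)
    removed = sorted(p for p in old if p not in new)
    changed = sorted(p for p in new if p in old and new[p] != old[p])
    return added, removed, changed
```

Only the paths in `added` and `changed` need to be sent on the next scheduled run, which is what keeps a daily update far smaller than a full resync.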

For AI engineering teams, this is the difference between managing pipelines and building models.

The Integration Imperative

No AI organization operates from a clean sheet. Existing investments in S3, Azure Blob, and local NAS are permanent realities.

Raysync delivers protocol-agnostic compatibility across Windows, Linux, macOS, iOS, and Android, with native connectors for every major storage platform.

More critically, our SDK and API interfaces enable embedding high-speed transfer directly into model training pipelines and MLOps orchestration.

This is not a standalone tool. It is a data transport substrate upon which AI infrastructure is constructed.

Conclusion

In 2026, the AI community confronts an uncomfortable truth: software intelligence is outpacing physical logistics.

We can architect models of unprecedented sophistication. But we cannot outrun the laws of physics. Data must still traverse fiber. Packets must still cross oceans. And when terabytes become petabytes, the protocol stack becomes the primary constraint on scientific possibility.

Raysync does not claim to have repealed these laws. What we have achieved is more valuable.

The enterprise that deploys Raysync does not merely transfer training data faster. It fundamentally reimagines the relationship between data location and model development. Geographic distribution ceases to be a liability. Dataset size ceases to be a deterrent.

Contact Raysync today for a customized proof of concept with your actual training datasets.
